History of the Computer: Learn How It Changed Life and Work

Published: 29 Jan 2026

Have you ever asked yourself how computers evolved from simple counting tools to the powerful machines we use today? The history of the computer is full of inventions, discoveries, and clever minds that changed the world. From Charles Babbage’s Analytical Engine to Alan Turing’s theoretical machines, and then to modern laptops and smartphones, every step mattered.

I have explored this journey in detail, and I can tell you that knowing this history helps anyone understand computers better, solve problems faster, and feel confident using technology in daily life.

Let’s dig deeper into the article and understand each part step by step.

Evolution of Computers

The evolution of computers explains how technology changed from simple calculating tools to advanced digital machines. Early computers could only handle basic tasks, but new inventions improved their speed and accuracy over time. Scientists and engineers played a key role in shaping modern computers through constant innovation.

As technology progressed, computers became smaller, faster, and easier to use. Today, computers support daily activities such as work, education, communication, and entertainment. This evolution shows how human creativity transformed computers into an essential part of modern life.

Early Counting Devices (Pre‑Computer Era)

Before modern computers existed, people needed simple ways to count, calculate, and keep records. Early counting devices helped humans manage trade, farming, and daily transactions. These tools reduced mental effort and improved accuracy in basic calculations. They also laid the foundation for later developments in computing.

Now, let’s explore the early counting devices that shaped the beginning of computing.

- Abacus (around 3000 BCE)

- Tally Marks (around 30,000 BCE)

- Counting Stones and Beads (around 4000 BCE)

- Napier’s Bones (1617)

- Slide Rule (1622)

1. Abacus (around 3000 BCE)

The abacus appeared when societies needed a reliable way to manage numbers in daily life. People used it to keep records and solve problems without writing figures on paper. Its design allowed users to move parts by hand to represent values clearly. Different cultures adapted it according to their needs and traditions. This tool remained useful for centuries because it worked without power or complex systems.

- Portable frame with sliding beads

- Built from wood, metal, and stone

- Used across Asia, Africa, and Europe

- Helps train mental math skills

2. Tally Marks (around 30,000 BCE)

Tally marks are one of the first ways humans recorded numbers. People used scratches or lines on bones, wood, or stones to keep track of items, time, or events. This method helped organize information long before writing systems existed.

Different cultures developed their own tally systems, showing that counting was a universal need. Tally marks were simple but effective, allowing early humans to communicate numerical ideas. They became the foundation for more advanced counting tools in later eras.

- Found in prehistoric caves in Europe

- Recorded numbers of hunted animals

- Tracked moon cycles and seasons

- Recorded trade items and quantities

3. Counting Stones and Beads (around 4000 BCE)

Counting stones and beads became an important tool as societies grew and trade expanded. People used small objects to represent numbers and perform calculations physically. This method helped track possessions, goods, and even time for daily activities.

Different communities created their own bead systems, showing creativity in solving counting problems. These tools allowed people to visualize quantities and manage tasks more efficiently. Over time, they influenced later devices for calculation and record keeping.

- Used in marketplaces for transactions

- Arranged in patterns for calculations

- Made from clay, wood, and shells

- Helped teach children basic math

4. Napier’s Bones (1617)

Napier’s Bones was a breakthrough tool for multiplying and dividing numbers quickly. John Napier invented it to simplify complex arithmetic for merchants, scientists, and students. The tool used rods with numbers inscribed on them, allowing calculations to be done with minimal effort.

It made long multiplication and division faster and more accurate than traditional methods. Napier’s Bones spread across Europe and influenced later mechanical calculators. This device showed how clever design could transform everyday mathematical tasks.

- Rods made from bone or wood

- Simplified multiplication for merchants

- Used a grid system for division

- Influenced early mechanical calculator designs
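Each rod stores every single-digit multiple of one digit in split squares (tens above the diagonal, units below), so multiplying becomes reading one row across the rods and adding along the diagonals. The Python sketch below models that procedure. It is an illustration only: the function names `napier_rod`, `multiply_by_digit`, and `napier_multiply` are hypothetical, and the code mirrors the paper-and-rod method rather than any historical text.

```python
def napier_rod(digit: int) -> list[tuple[int, int]]:
    """Return the nine squares of one rod as (tens, units) pairs for digit * 1..9."""
    return [divmod(digit * k, 10) for k in range(1, 10)]


def multiply_by_digit(number: int, k: int) -> int:
    """Multiply `number` by a single digit k (1-9) using one rod per digit."""
    rods = [napier_rod(int(d)) for d in str(number)]
    row = [rod[k - 1] for rod in rods]  # read row k across the laid-out rods
    result_digits = []
    carry = 0
    prev_tens = 0
    # Work right to left: each diagonal adds the units of one square to the
    # tens of the square on its right, plus any carry from the previous diagonal.
    for tens, units in reversed(row):
        total = units + prev_tens + carry
        result_digits.append(total % 10)
        carry = total // 10
        prev_tens = tens
    # The leftmost diagonal holds only the tens of the first square (plus carry).
    result_digits.append(prev_tens + carry)
    return int("".join(str(d) for d in reversed(result_digits)))


def napier_multiply(a: int, b: int) -> int:
    """Multiply a by a multi-digit b by summing shifted single-digit products."""
    total = 0
    for place, d in enumerate(reversed(str(b))):
        if d != "0":
            total += multiply_by_digit(a, int(d)) * 10 ** place
    return total


print(napier_multiply(425, 36))  # 15300
```

Splitting a long multiplication into single-digit rows and then adding shifted partial products is exactly the bookkeeping a merchant would still do by hand; the rods only remove the need to memorize the times tables.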

5. Slide Rule (1622)

The slide rule became a powerful tool for engineers, scientists, and students to perform calculations efficiently. Invented in 1622, it used moving scales to handle multiplication, division, and roots quickly. People carried it for work in navigation, architecture, and astronomy.

Slide rules were portable and durable, making them practical for long projects and travel. They stayed in use for hundreds of years before electronic calculators became common. The device taught users to understand numbers visually and estimate results effectively.

- First commercially produced in England

- Scale layouts varied by manufacturer

- Used in early rocket calculations

- Essential in university engineering courses
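A slide rule’s scales are marked at distances proportional to the logarithm of each number, so sliding one scale along another adds or subtracts logarithms, which multiplies or divides the numbers. The Python sketch below is an idealized model of that principle, not a simulation of a real instrument: the function names are hypothetical, inputs must be positive, and the three-significant-figure reading limit is an assumption meant to resemble a typical scale.

```python
import math


def read_scale(value: float, sig_figs: int = 3) -> float:
    """Round to the few significant figures a user can read off the scale
    (three is an assumed, typical reading precision)."""
    exponent = math.floor(math.log10(abs(value)))
    return round(value, sig_figs - 1 - exponent)


def slide_rule_multiply(a: float, b: float) -> float:
    # Placing one log scale against the other adds their lengths:
    # log10(a) + log10(b) = log10(a * b). Inputs must be positive.
    return read_scale(10 ** (math.log10(a) + math.log10(b)))


def slide_rule_divide(a: float, b: float) -> float:
    # Sliding the scale the other way subtracts lengths:
    # log10(a) - log10(b) = log10(a / b).
    return read_scale(10 ** (math.log10(a) - math.log10(b)))


print(slide_rule_multiply(3.7, 4.2))  # 15.5 (exact answer: 15.54)
print(slide_rule_divide(9, 3))        # 3.0
```

The rounding step is why slide rule users learned to estimate: the scale gives the significant digits, and the user works out where the decimal point goes.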

Mechanical Calculators (17th–19th Century)

Mechanical calculators marked a major step in the evolution of computing. Inventors created machines that could perform addition, subtraction, multiplication, and division automatically. These devices helped merchants, engineers, and scientists save time and reduce errors. Over two centuries, mechanical calculators became more advanced, paving the way for modern computing machines.

Now, let’s explore some important mechanical calculators that shaped this era.

- Pascal’s Calculator (1642)

- Leibniz’s Step Reckoner (1673)

- Arithmometer (1820)

- Comptometer (1887)

- Difference Engine (1822)

Let’s take a closer look at the most important mechanical calculators from this era.

1. Pascal’s Calculator (1642)

Pascal’s calculator was one of the first mechanical devices designed to perform arithmetic automatically. Blaise Pascal invented it to help his father with tax calculations and financial records. The machine could add and subtract numbers using a system of gears and wheels. It reduced errors and saved significant time compared to manual calculations.

Although limited to basic operations, it inspired future inventors to create more advanced calculating machines. Pascal’s work demonstrated the potential of mechanical computation and the value of innovation in daily tasks.

- Operated with rotating numbered wheels

- Handled multi-digit numbers, one wheel per digit

- Made primarily from brass and steel

- Displayed results through a small window
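The key idea in Pascal’s machine was that each wheel stood for one decimal digit and passed a carry to the next wheel when it turned past 9. The Python sketch below models only that digit-wheel-with-carry behaviour. The function names and the list-of-wheels representation are hypothetical, and the code ignores the real machine’s gearing and any variant wheel layouts.

```python
def pascaline_add(wheels: list[int], amount: int) -> list[int]:
    """Add `amount` to a row of digit wheels (wheels[0] is the units wheel).

    A wheel that turns past 9 wraps to 0 and nudges its left neighbour by one,
    which is the carry. A carry out of the leftmost wheel is lost, as on a
    machine with a fixed number of wheels.
    """
    wheels = wheels.copy()
    for position, digit in enumerate(reversed(str(amount))):
        turn = int(digit)
        while turn and position < len(wheels):
            total = wheels[position] + turn
            wheels[position] = total % 10
            turn = total // 10  # 1 if the wheel passed 9, otherwise 0
            position += 1       # any carry moves to the next wheel left
    return wheels


def read_window(wheels: list[int]) -> int:
    """Read the digits shown in the display windows as a single number."""
    return int("".join(str(w) for w in reversed(wheels)))


wheels = pascaline_add([0] * 6, 758)
wheels = pascaline_add(wheels, 467)
print(read_window(wheels))  # 1225
```

The automatic carry is what separated this kind of machine from an abacus: the user never had to notice that a column overflowed.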

2. Leibniz’s Step Reckoner (1673)

Leibniz’s Step Reckoner introduced new ways to perform arithmetic mechanically. Gottfried Wilhelm Leibniz created it to handle addition, subtraction, multiplication, and division automatically. Its stepped drum system allowed calculations that earlier devices could not manage.

Leibniz intended it to help traders and engineers solve complex problems more efficiently, although very few were built and its carry mechanism was unreliable. The machine was delicate but powerful, showing how precise engineering could simplify number work. Its design inspired future inventors to explore more advanced calculating tools.

- Featured innovative stepped drum design

- Performed all four arithmetic operations

- Built with durable metal components

- Designed for trade and engineering tasks

3. Arithmometer (1820)

The Arithmometer was the first mechanical calculator designed for regular commercial use. Invented by Charles Xavier Thomas, it could perform addition, subtraction, multiplication, and division quickly and reliably.

Businesses and offices adopted it to handle large amounts of numerical data. Its gear and lever system made it much faster than manual calculations. The Arithmometer was compact enough to be used on desks, improving efficiency in bookkeeping. It remained popular for decades, influencing the development of later calculating machines.

- Mass-produced across European countries

- Could perform complex financial calculations

- Inspired future industrial calculating devices

- Included durable metal and wood parts

4. Comptometer (1887)

The Comptometer was one of the first key-driven mechanical calculators. Dorr E. Felt invented it to simplify calculations for businesses and offices. Users pressed keys to enter numbers, which made arithmetic faster and more accurate.

It could handle addition, subtraction, multiplication, and division without resetting between operations. Multiple people could use it during busy workdays, increasing productivity. The Comptometer set a standard for mechanical computation before electronic machines arrived.

- Introduced a fully automatic carry system

- Produced with a durable cast metal frame

- Sold widely to government agencies

- Adapted for scientific measurement tasks

5. Difference Engine (1822)

The Difference Engine was an early mechanical calculator designed to compute polynomial functions automatically. Charles Babbage created it to reduce human errors in producing mathematical tables for navigation and engineering. The machine used a series of gears and wheels to perform repeated calculations precisely.

Although Babbage never completed a full working model in his lifetime, a small working section he built in 1832 showed that the design was sound. The Difference Engine inspired engineers and inventors to create more advanced calculating machines in the future. Its successor design, Babbage’s Analytical Engine, carried these ideas toward a general-purpose programmable computer.

- Required hundreds of interlocking metal parts

- Could print results on paper sheets

- Included an innovative carry-over mechanism

- Displayed numerical output with rotating drums
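The Difference Engine tabulated polynomials with the method of finite differences: once the starting value and its differences are set on the columns, every further table entry needs only repeated addition. The Python sketch below illustrates that method. The names `initial_differences` and `difference_engine` are hypothetical, and the polynomial x*x + x + 41 is just an example choice.

```python
from typing import Callable


def initial_differences(f: Callable[[int], int], start: int, degree: int) -> list[int]:
    """Return f(start) and its forward differences up to the given degree,
    i.e. the starting values an operator would set on the engine's columns."""
    values = [f(start + i) for i in range(degree + 1)]
    columns = []
    while values:
        columns.append(values[0])
        values = [b - a for a, b in zip(values, values[1:])]
    return columns


def difference_engine(columns: list[int], steps: int) -> list[int]:
    """Produce `steps` table entries using nothing but addition."""
    columns = columns.copy()
    table = []
    for _ in range(steps):
        table.append(columns[0])
        # One turn of the crank: column i gains column i + 1. Going from
        # i = 0 upward means each addition uses column i + 1's old value.
        for i in range(len(columns) - 1):
            columns[i] += columns[i + 1]
    return table


cols = initial_differences(lambda x: x * x + x + 41, start=0, degree=2)
print(cols)                        # [41, 2, 2]
print(difference_engine(cols, 6))  # [41, 43, 47, 53, 61, 71]
```

For a polynomial of degree n the n-th difference is constant, so n + 1 columns are enough to produce an unlimited table without any multiplication, which is what made the design mechanically practical.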

Rise of Electronic Computers (1930s–1940s)

During the 1930s and 1940s, computers began shifting from mechanical devices to electronic machines. Inventors used vacuum tubes and early circuits to process data much faster than previous mechanical calculators. These developments laid the groundwork for modern computing and influenced scientific, military, and business applications. The era marked the start of computers as powerful tools for complex problem-solving.

Now, let’s explore the key electronic computers of this period.

- Atanasoff-Berry Computer (ABC) (1937–1942)

- Colossus (1943–1945)

- ENIAC (1943–1946)

- EDVAC (Designed 1945, Operational 1949)

Now, we explore key computers from this period.

1. Atanasoff-Berry Computer (ABC) (1937–1942)

The Atanasoff-Berry Computer marked an important step toward modern computing. John Atanasoff and Clifford Berry built it to solve systems of linear equations. The machine performed its arithmetic with vacuum-tube circuits instead of mechanical parts, which made calculations faster.

It used binary numbers rather than the decimal system used by earlier devices. Although it was not programmable, it proved that electronic computing could work. The ABC later inspired the design of future electronic computers.
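Working in binary means every number is a row of on/off states, and addition reduces to a few logic operations per bit plus a carry. The Python sketch below is a generic ripple-carry adder that shows that idea. It is not a model of the ABC’s actual circuits, and the function names and the 8-bit width are assumptions chosen for illustration.

```python
def to_bits(n: int, width: int) -> list[int]:
    """Split a non-negative integer into `width` bits, least significant first."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits: list[int]) -> int:
    """Rebuild an integer from bits given least significant first."""
    return sum(bit << i for i, bit in enumerate(bits))


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three bits using only logic operations; returns (sum, carry_out)."""
    total = a ^ b ^ carry_in
    carry_out = (a & b) | (carry_in & (a ^ b))
    return total, carry_out


def add_binary(x: int, y: int, width: int = 8) -> int:
    """Add two numbers bit by bit, passing the carry along like a circuit would.
    Any carry out of the top bit is dropped, as in a fixed-width register."""
    carry = 0
    out = []
    for a, b in zip(to_bits(x, width), to_bits(y, width)):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    return from_bits(out)


print(to_bits(13, 8))     # [1, 0, 1, 1, 0, 0, 0, 0]
print(add_binary(13, 6))  # 19
```

Because each bit has only two states, the same small circuit can be repeated for every position, which is a large part of why binary suited electronic hardware better than decimal.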

2. Colossus (1943–1945)

Colossus was one of the first electronic machines used for practical work. British engineers developed it during World War II to help break encrypted messages. The system processed information at high speed by reading data from punched paper tape.

It relied on electronic valves, which made it much faster than earlier devices. Colossus did not store programs, but operators could adjust its settings for different tasks. Its success showed how electronics could handle large data problems efficiently.

3. ENIAC (1943–1946)

ENIAC was the first large-scale, general-purpose electronic computer. Engineers in the United States created it to perform complex numerical calculations quickly. The machine used thousands of vacuum tubes to operate at speeds far beyond mechanical systems. Programmers set up each new task by rewiring plugboards and setting switches. ENIAC proved that electronic computers could solve scientific and military problems efficiently.

4. EDVAC (Designed 1945, Operational 1949)

EDVAC introduced a new way to design computers by storing program instructions in memory alongside data. This approach reduced manual rewiring and made systems easier to control. Because the program lived in memory rather than in the wiring, changing a task meant loading new instructions instead of rebuilding the hardware setup. Engineers used binary numbers instead of decimal values for better efficiency. EDVAC shaped the structure of many future computer designs.
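The stored-program idea means instructions sit in the same memory as the numbers they work on, so giving the machine a new task means changing memory contents rather than rewiring. The toy Python machine below illustrates only that idea. Its four-instruction set (`LOAD`, `ADD`, `STORE`, `HALT`) and the `run` function are hypothetical and do not reproduce EDVAC’s real instruction set or memory design.

```python
def run(memory: list, max_steps: int = 1000) -> list:
    """Execute a program held in `memory`, which also holds its data.

    Instruction cells are (opcode, address) tuples; data cells are plain ints.
    A single accumulator register holds intermediate results.
    """
    acc = 0  # accumulator
    pc = 0   # program counter: address of the next instruction
    for _ in range(max_steps):
        op, addr = memory[pc]
        pc += 1
        if op == "LOAD":
            acc = memory[addr]
        elif op == "ADD":
            acc += memory[addr]
        elif op == "STORE":
            memory[addr] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r}")
    raise RuntimeError("program did not halt within max_steps")


# Addresses 0-3 hold the program; 4-6 hold data in the same memory.
memory = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT", 0),
    20,
    22,
    0,
]
print(run(memory)[6])  # 42
```

To run a different calculation, you would load different tuples into `memory`; the `run` loop, standing in for the hardware, stays exactly the same.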

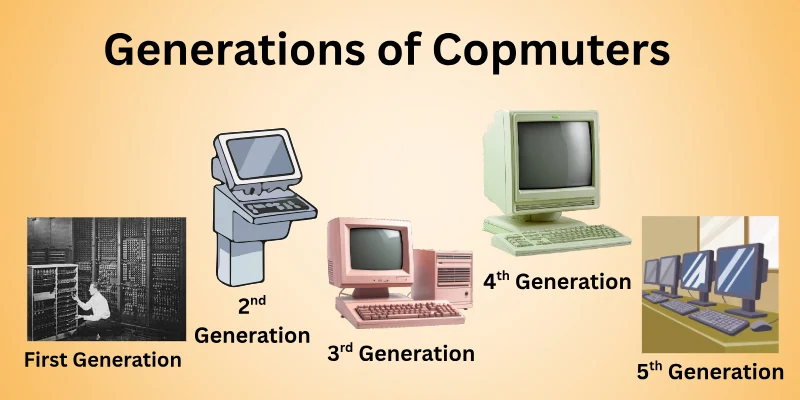

Generations of Computers

Computers did not change all at once. They evolved step by step as technology improved over time. Each generation introduced new components, better speed, and wider use in daily life. Understanding these generations helps us see how modern computers became so powerful.

Let us explore each generation one by one.

- First-Generation Computers (1940s to 1950s)

- Second Generation Computers (1950s to 1960s)

- Third Generation Computers (1960s to 1970s)

- Fourth Generation Computers (1970s to Present)

- Fifth Generation Computers (Present and Future)

Now, let’s take a closer look at each computer generation and its features.

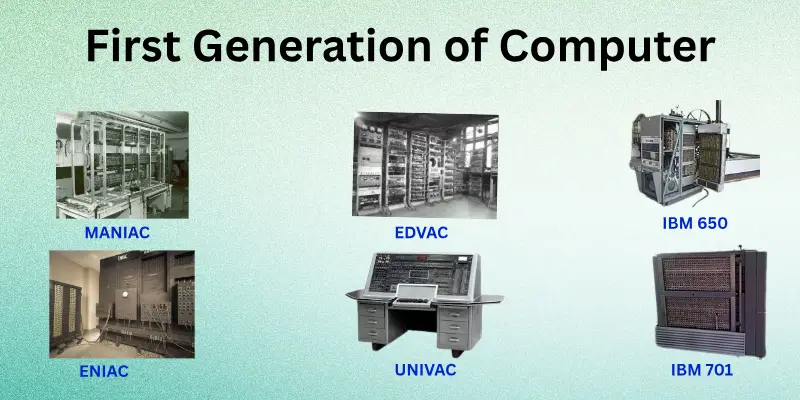

1. First-Generation Computers (1940s to 1950s)

The first generation of computers used vacuum tubes to process data. They were extremely large and consumed a lot of electricity. Programming was done using machine language, which required precise instructions. These computers were mainly used for scientific calculations and government tasks.

Features:

- Used vacuum tubes for circuitry

- Very large and heavy

- Generated excessive heat while working

- Programming required machine language knowledge

- Limited memory and storage capacity

- High electricity consumption

2. Second Generation Computers (1950s to 1960s)

The second generation of computers replaced vacuum tubes with transistors, which made machines more reliable. They could handle larger tasks and process information faster than first-generation computers.

Programming improved with the introduction of assembly language, allowing more flexibility in operations. These computers were widely used in universities, businesses, and government offices for calculations and data management.

Features:

- Could connect to magnetic tape storage

- Supported batch processing of tasks

- Used punched cards for input

- Allowed multiple programs in sequence

- Smaller cabinets compared to the first generation

- Required cooling systems for transistors

3. Third Generation Computers (1960s to 1970s)

The third generation of computers used integrated circuits, which placed many transistors on a single chip. These machines were smaller, faster, and more reliable than previous generations. Programming became easier as high-level languages like COBOL and FORTRAN, first introduced in the late 1950s, came into wide use. Computers started to be used in offices, research labs, and businesses for more complex calculations and data management.

Features:

- Could perform multiple tasks simultaneously

- Connected to disk storage systems

- Supported early computer networking

- Used printed circuit boards for circuits

- Offered better error detection methods

- Reduced maintenance compared to earlier machines

4. Fourth Generation Computers (1970s to Present)

Fourth-generation computers used microprocessors, which combined a computer’s central processing functions on a single chip. They required less space and consumed much less electricity than earlier machines. People could use the same machine for business, education, and entertainment. This generation brought personal computers, workstations, and the first portable devices, changing how people interact with technology.

Features:

- Supported graphical user interfaces

- Connected to local and wide networks

- Used large-capacity hard drives for storage

- Enabled portable laptops and personal computers

- Allowed multitasking across several programs

- Integrated advanced input and output devices

5. Fifth Generation Computers (Present and Future)

Fifth-generation computers focus on advanced technology to perform intelligent tasks. They use high-speed processors and modern memory systems to handle large amounts of data efficiently. These computers are designed to understand natural language, recognize patterns, and assist with decision-making. They are widely used in research, robotics, automation, and complex simulations for various industries.

Features:

- Employ parallel processing for faster results

- Support voice and image recognition systems

- Use cloud computing for large storage

- Integrate with smart devices and robots

- Perform real-time data analysis

- Enable artificial intelligence applications

Personal Computer Revolution (1970s to 1980s)

During the 1970s and 1980s, computers became accessible to everyday people for the first time. Smaller machines allowed users to work, study, and create from their own desks. This period shifted computing from large organizations to individuals and small teams. Personal computers began to shape modern digital habits and home technology.

Now, let’s examine the systems behind this shift.

- Apple I (1976)

- Apple II (1977)

- IBM Personal Computer (IBM PC) (1981)

- Commodore 64 (1982)

- Macintosh (1984)

Now, let’s go through these machines step by step.

1. Apple I (1976)

Apple I was one of the earliest personal computers sold to individual users. Steve Wozniak designed it as a single pre-assembled circuit board that buyers completed at home. Unlike earlier machines, it targeted hobbyists who wanted direct control over their computers.

Users needed to add their own keyboard, display, and power supply. This early system helped prove that computers could belong on personal desks, not only in large institutions. It also encouraged early users to learn how hardware and software worked together. This hands-on experience inspired future innovation in personal computer design.

2. Apple II (1977)

Steve Wozniak designed the Apple II, and Steve Jobs helped bring it to the market. The machine came fully assembled, which removed the need for technical setup. Color graphics and sound made using the computer more engaging and fun. Schools and small businesses quickly adopted it for learning and record-keeping.

A wide range of software turned it into a flexible tool for different tasks. Developers began creating new programs and games for the system. A growing community of users shared tips, ideas, and solutions, helping the Apple II become even more popular.

3. IBM Personal Computer (IBM PC) (1981)

IBM introduced the Personal Computer under the leadership of Don Estridge to bring reliable computing to offices and businesses. Users could run a range of applications for accounting, word processing, and data management.

The system used standardized hardware, which allowed third-party manufacturers to create compatible components. Its open design encouraged software development, making the PC more versatile. Businesses quickly adopted it because it was dependable and easy to maintain. A strong support network helped users troubleshoot and expand their systems.

4. Commodore 64 (1982)

The Commodore 64 was created by a team led by Jack Tramiel at Commodore International. This computer became widely popular for home use because of its affordability and versatility. It offered advanced graphics and sound for games, making it a favorite among young users.

People also used it for learning, programming, and small business tasks. A large library of software and peripherals expanded its capabilities. Fans and hobbyists formed communities to share programs, tips, and creative ideas.

5. Macintosh (1984)

Apple developed the Macintosh under the leadership of Steve Jobs to bring graphical user interfaces to personal computers. This machine allowed users to interact with icons and windows instead of typing commands. It had a built-in screen and shipped with a mouse, making computing more intuitive for everyone.

Businesses, schools, and creative professionals quickly adopted it for design, writing, and education. Developers created specialized software that leveraged its graphical capabilities. A strong community of users and enthusiasts helped the Macintosh gain popularity and influence future computer designs.

Networking and the Internet (1990s–Present)

The 1990s marked a major shift as computers became connected worldwide through networks. The Internet allowed people to share information, communicate instantly, and access vast resources. Businesses, schools, and homes started relying on online systems for work, learning, and entertainment. Networking changed how individuals collaborate, making the world more connected than ever before.

Let’s explore the key technologies and milestones that shaped networking and the Internet.

- World Wide Web (1991)

- Email Expansion (1990s)

- Broadband and High-Speed Internet (2000s)

- Wireless Networking and Wi-Fi (2000s–Present)

- Cloud Computing and Online Services (2010s–Present)

Now, we explore the key technologies behind the Internet.

1. World Wide Web (1991)

The World Wide Web was invented by Tim Berners-Lee to make information accessible to everyone. It allowed people to view and share content through web pages using browsers. Websites began to appear, offering news, research, and entertainment in an easy-to-use format. The Web transformed how individuals, businesses, and institutions communicate and access knowledge worldwide.

Features:

- Uses hyperlinks to connect web pages

- Supports multimedia, including images and video

- Accessible through simple web browsers

- Enabled online publishing and sharing

2. Email Expansion (1990s)

During the 1990s, email became a common way to communicate quickly and efficiently. Businesses, schools, and individuals adopted it for daily messaging and document sharing. It reduced the need for letters and phone calls, saving time and resources. Email also paved the way for newsletters, marketing campaigns, and professional correspondence across the globe.

Features:

- Allowed attachments like documents

- Enabled multiple recipients at once

- Supported spam filtering and organization

- Accessible through early webmail platforms

3. Broadband and High-Speed Internet (2000s)

In the 2000s, broadband technology made Internet connections faster and more reliable. People could download files, stream videos, and access websites without long delays. Businesses started using high-speed Internet for online services, video conferencing, and cloud applications. This technology transformed everyday online activities, making the Internet an essential part of work, learning, and entertainment.

Features:

- Provided an always-on Internet connection

- Supported multiple users at the same time

- Enabled streaming of high-quality media

- Reduced latency for online gaming

4. Wireless Networking and Wi-Fi (2000s–Present)

Wireless networking and Wi-Fi brought the freedom to connect without cables. Homes, offices, and public spaces started offering easy Internet access for laptops, phones, and tablets. Users could move around while staying online, which changed how people work and communicate. This technology also enabled smart devices, online collaboration, and seamless streaming in everyday life.

Features:

- Supports multiple devices simultaneously

- Works over secure wireless protocols

- Extends Internet access to public areas

- Allows connection of smart home gadgets

5. Cloud Computing and Online Services (2010s–Present)

Cloud computing transformed how people and businesses store and access data. Users can save files, run applications, and collaborate online without relying on local hardware. Companies started offering services like email, document editing, and data backup over the Internet. This shift made technology more flexible, scalable, and accessible for everyone, from students to large enterprises.

Features:

- Offers on-demand computing resources

- Supports remote collaboration across locations

- Provides automatic software updates

- Enables storage without physical devices

Modern and Future Computing

Computing technology continues to evolve at a rapid pace, changing how people live and work. Modern systems are faster, smaller, and more connected than ever before. Looking ahead, future computing promises even greater intelligence, efficiency, and new possibilities for everyone.

Modern Computing

Modern computing focuses on high-speed processors, powerful memory, and interconnected systems. Users rely on laptops, smartphones, and cloud platforms for daily work, education, and entertainment. Artificial intelligence, virtual reality, and big data tools are becoming standard in businesses and homes. Innovations in security, software, and network technologies make these systems more reliable and efficient.

Future Computing

Future computing aims to push technology beyond current limits. Quantum computers, advanced AI, and neural networks may solve certain complex problems much faster. Human-computer interaction is likely to become more natural with voice, gesture, and even brain-controlled interfaces. These advancements could reshape industries, healthcare, research, and everyday life, opening possibilities that were once imagined only in science fiction.

Conclusion

In this guide, we have covered the history of the computer. Computers make tasks easier and faster, but they can also cause distractions or privacy concerns if not used carefully. We can manage these risks by practicing safe computing, balancing screen time, and staying aware of online security.

I sincerely thank you for reading this guide, and I hope it gave you useful insights. Don’t skip the FAQs that follow; they cover a few more points you may find interesting.

FAQs:

Here you will find common questions about the history of the computer. The answers will make this topic clear and easy for anyone starting out.

The first design for a general-purpose mechanical computer is usually credited to Charles Babbage, who designed the Analytical Engine in the 19th century, although it was never built. Later, machines like ENIAC were among the first electronic computers. These inventions laid the foundation for modern computing. Babbage is often called the “father of the computer.”

Early computers were used for calculations in mathematics, astronomy, and business. Devices like the abacus, Napier’s bones, and mechanical calculators helped speed up calculations. Governments and companies used early computers for accounting and scientific research. They were not used for entertainment or personal tasks.

Electronic computers began in the 1930s and 1940s with machines like the ENIAC, Colossus, and Atanasoff-Berry Computer. They used vacuum tubes to perform calculations much faster than mechanical machines. These early computers handled large-scale data processing for military and scientific purposes. They marked the beginning of modern computing.

Personal computers like the Apple I, Apple II, and IBM PC brought computing into homes and small businesses in the 1970s and 1980s. They made technology accessible to individuals, not just organizations. This revolution led to software development, gaming, and daily digital tasks. PCs created a new era of personal computing.

The Altair 8800, released in 1975, is widely regarded as the first commercially successful personal computer. Soon after, the Apple I (1976) and Apple II (1977) popularized home computing. These innovations made computing accessible to individuals and small businesses. They shaped the modern PC revolution.

Modern computers are fast, portable, and connected to the Internet. They support AI, big data, virtual reality, and cloud computing. These systems continue to revolutionize work, learning, and entertainment. They represent the most advanced stage in the history of computing.

Alan Turing is considered a pioneer in computing. He described the Turing machine, a theoretical model of computation that became a foundation of computer science. During World War II, he worked at Bletchley Park on breaking German Enigma messages and designed the electromechanical Bombe used in that effort. His work influenced both theoretical and practical computer development.

Vacuum tubes powered first-generation computers, allowing them to perform calculations electronically. They were faster than mechanical parts but consumed a lot of energy and generated heat. This innovation made electronic computing possible and set the stage for transistor-based machines.

Supercomputers process data extremely fast and handle complex calculations. Machines like the Cray-1 were used in scientific research, weather prediction, and simulations. Supercomputers showcase the peak of computing performance and advanced design. They highlight technological growth in computer history.
